NVIDIA from another angle — looks invincible, but the cracks are showing.

Compute is high-conviction demand, and the metrics are extremely well-defined. Unlike Apple or Tesla — consumer products where the decision is multidimensional, with brand / emotion / ecosystem stickiness keeping customers “irrational” — large B2B customers are coldly rational: as soon as a better alternative or competitor appears, they’ll switch without hesitation, and at minimum cultivate a second supplier to preserve negotiating leverage. NVIDIA’s monopoly isn’t invincible: it’s only the sum of getting in early enough, scaling fast enough, and pulling the ecosystem far enough ahead. It’s a clear racetrack, and challengers will keep appearing — and the moment NVIDIA slows, it gets passed. This piece is built around four questions: 1) why challengers suddenly appeared → 2) where it’s happening → 3) what else is accelerating it → 4) when, and how to watch it.

§01 · Another angle — why NVDA isn’t invincible

Image: Wikimedia Commons / NVIDIA · Public Domain.

NVIDIA’s moat is real — CUDA, NVLink, HBM lock-in, CoWoS capacity, 25 years of ecosystem accretion — but the true substrate of every B2B story is customer rationality. This is the biggest difference between NVIDIA and Apple or Tesla. Apple sells 200 million iPhones a year to consumers, where emotion and brand drive most of the decision; same for Tesla. But the GPUs sold to OpenAI / Anthropic / Microsoft / Google / Meta / ByteDance are bought by some of the most coldly rational engineering teams on Earth — they look at tokens-per-dollar, tokens-per-watt, and total cost per training run. Logos don’t matter; brand storytelling doesn’t matter.

That means NVIDIA’s monopoly carries a built-in instability: the moment it slows, large customers will immediately cultivate a second supplier. Look at what’s already happened — Google built TPUs (and is now selling them externally), Amazon built Trainium, Microsoft built Maia, Meta built MTIA, OpenAI committed $20B to Cerebras and partnered with Broadcom on a bespoke inference chip. Every one of them is actively cultivating a second source. This isn’t a conspiracy theory; it’s B2B procurement 101.

| Metric | Value | Notes |
|---|---|---|
| FY26 data-center revenue | $215.9B | +89% YoY · Q4 $62.3B · ~89% of total revenue · top 4 CSPs combined are 61% of quarterly revenue (per NVIDIA Newsroom) |
| Non-GAAP gross margin | ~75% | Q4 75% · midpoint guide 75% · B200 gross margin per card ~84% (ASP $35–40K · build cost ~$6.4K) |
| AI accelerator share | 80–90% | Estimates vary widely · 2024 peak 87% · 2026 expected ~75% |
| Cost per training run | $50M–$500M | Frontier-model order of magnitude · failure cost is enormous → no one wants to risk immature hardware · the source of training as a fortress |
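The per-card margin in the table follows directly from the quoted ASP and build cost. A minimal sketch of that arithmetic, assuming the table's figures are the only inputs (the function name `gross_margin` is illustrative):

```python
def gross_margin(asp_usd: float, build_cost_usd: float) -> float:
    """Gross margin as a fraction of the selling price."""
    return (asp_usd - build_cost_usd) / asp_usd


BUILD_COST_USD = 6_400  # ~$6.4K build cost quoted in the table

# ASP range quoted in the table: $35K to $40K
for asp in (35_000, 40_000):
    print(f"ASP ${asp:,}: per-card margin {gross_margin(asp, BUILD_COST_USD):.1%}")
# ASP $35,000: per-card margin 81.7%
# ASP $40,000: per-card margin 84.0%
```

The ~84% figure corresponds to the top of the ASP range; at the low end the same build cost gives roughly 82%.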

NVIDIA’s monopoly isn’t invincible. The moat is real, but it’s only the sum of “got in early + scaled fast + pulled the ecosystem ahead,” not a structural impossibility. The moment it slows, challengers swarm, and customer rationality speeds up the unwinding.

— the question to answer here isn’t “if,” it’s “when” and “how to watch”

§02 · Arch convergence — why challengers suddenly appeared

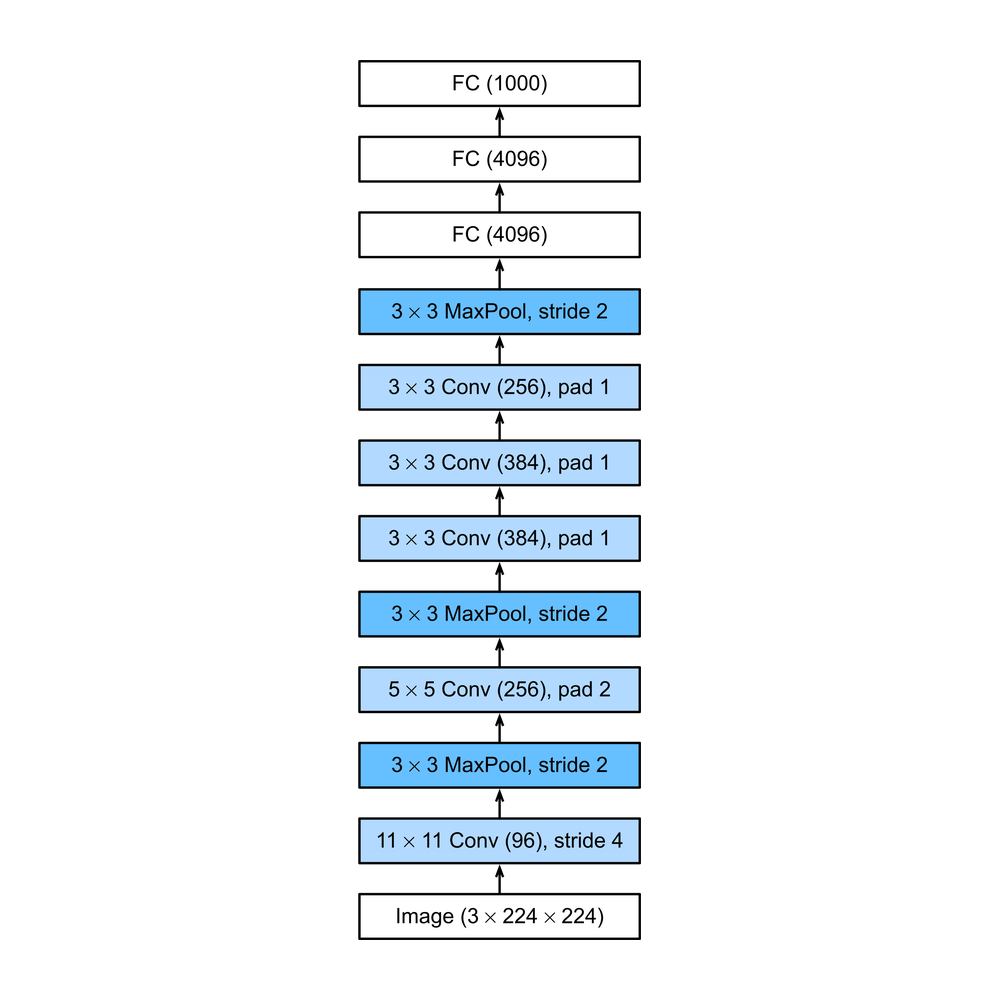

2012 · CNN debut

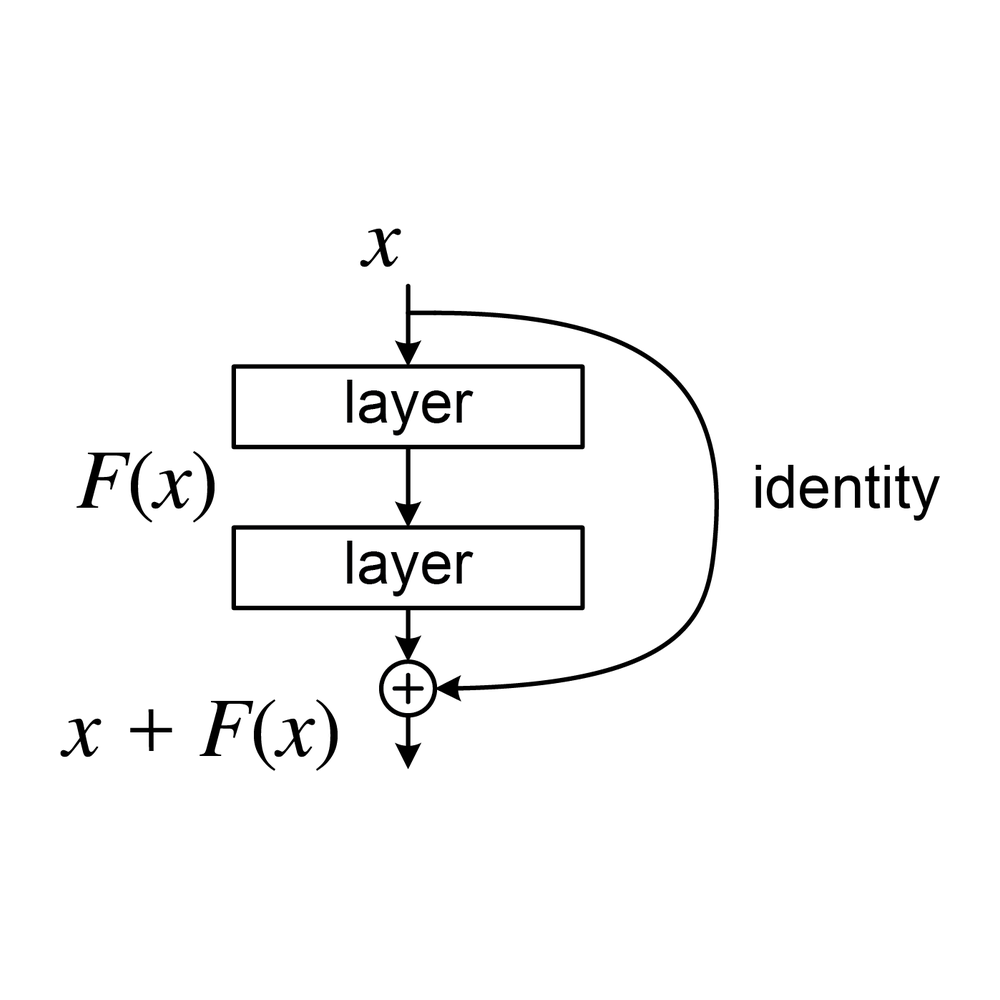

2015 · residual connection

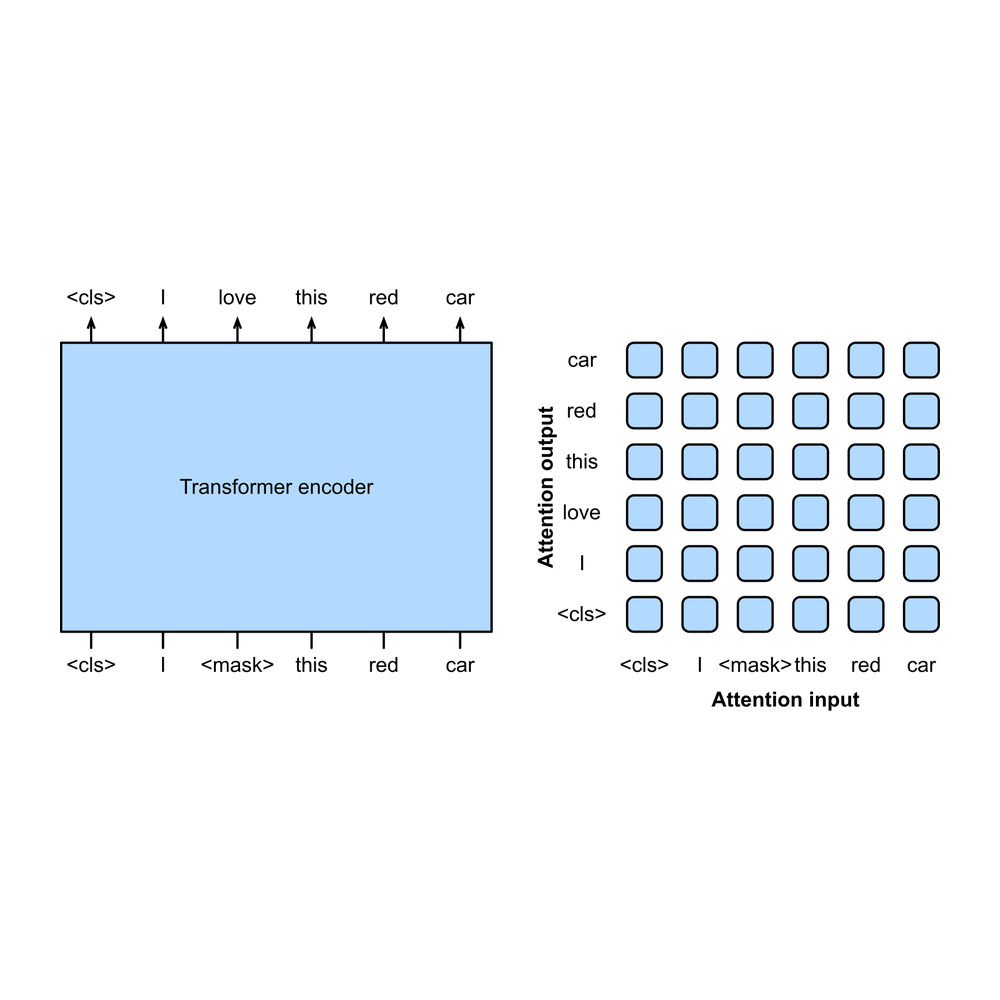

2018 · Encoder-only

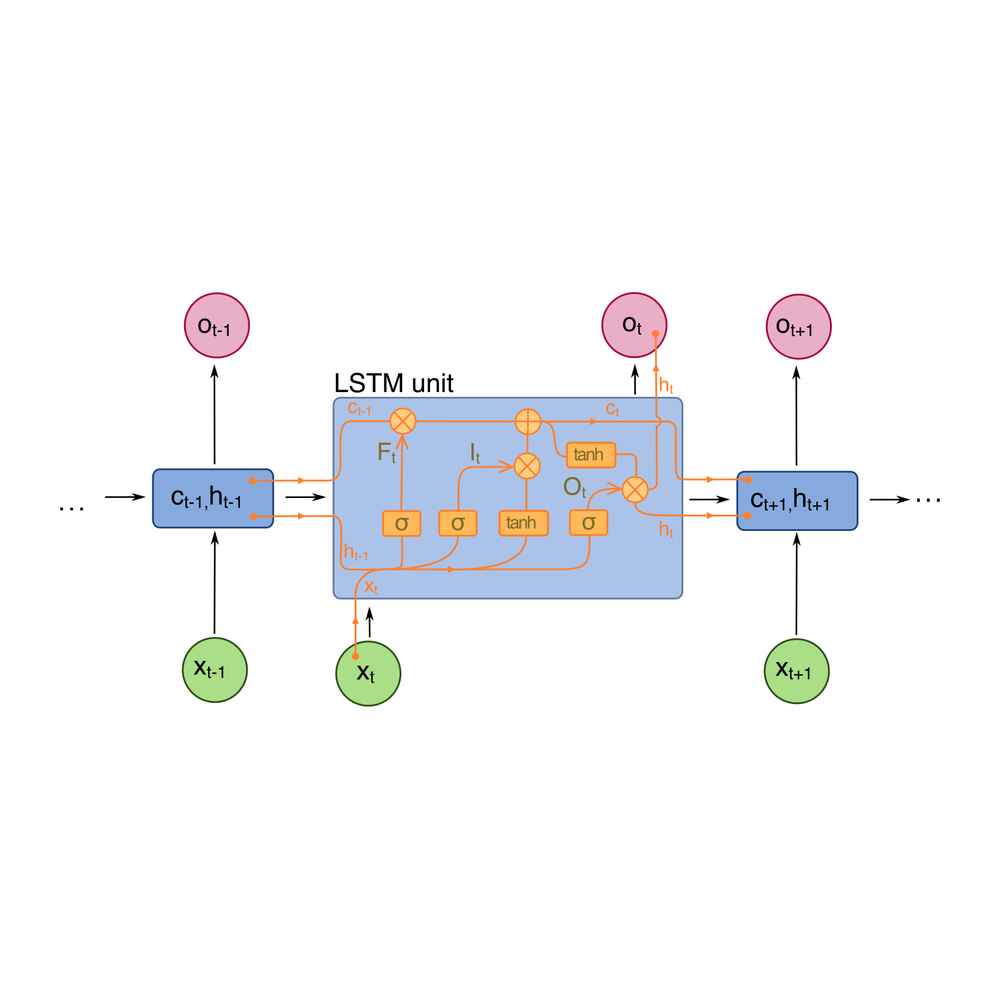

1997 · RNN / sequence

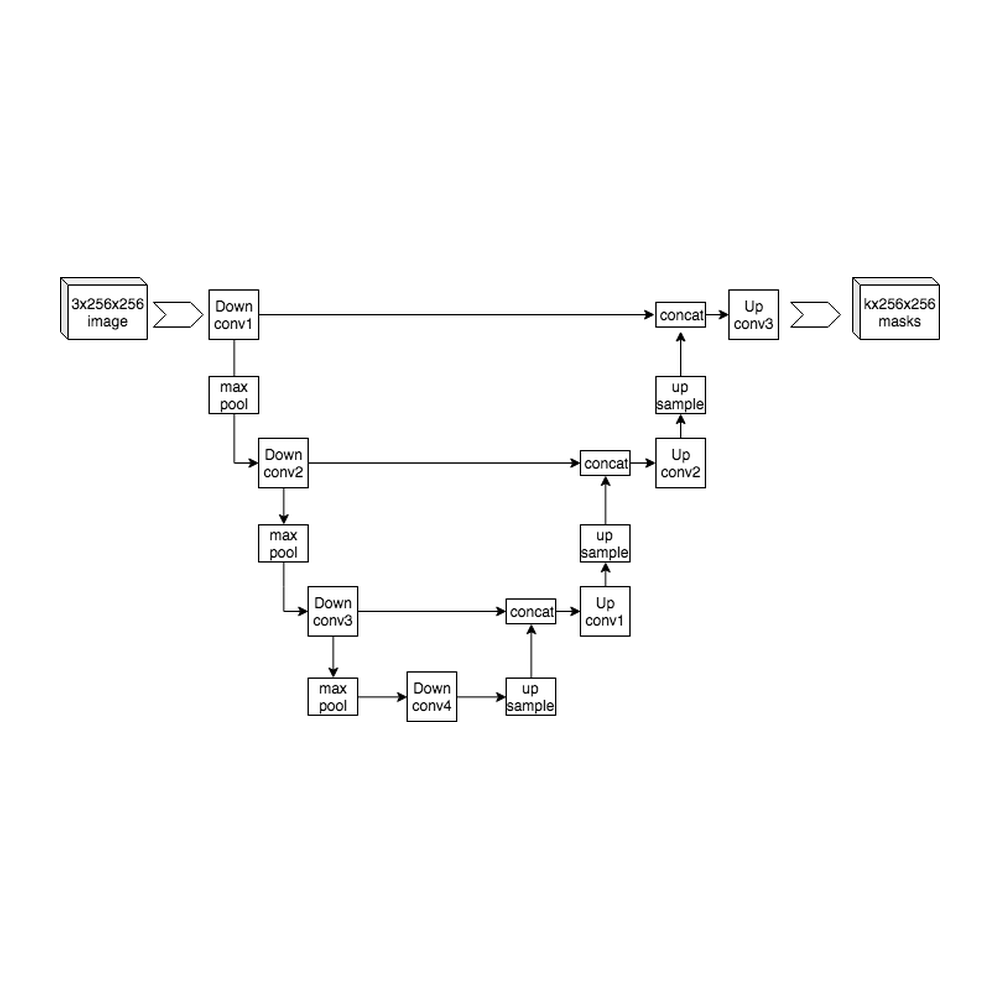

2015 · image segmentation

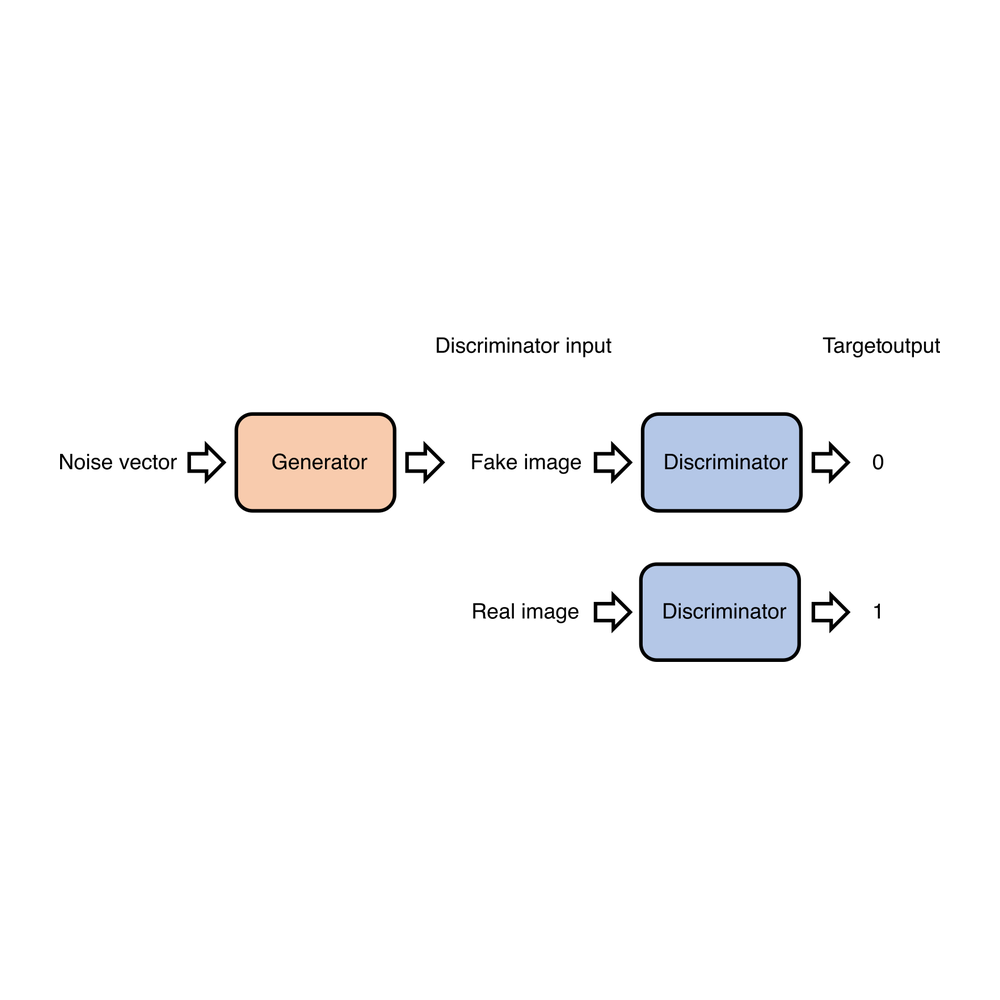

2014 · generative adversarial

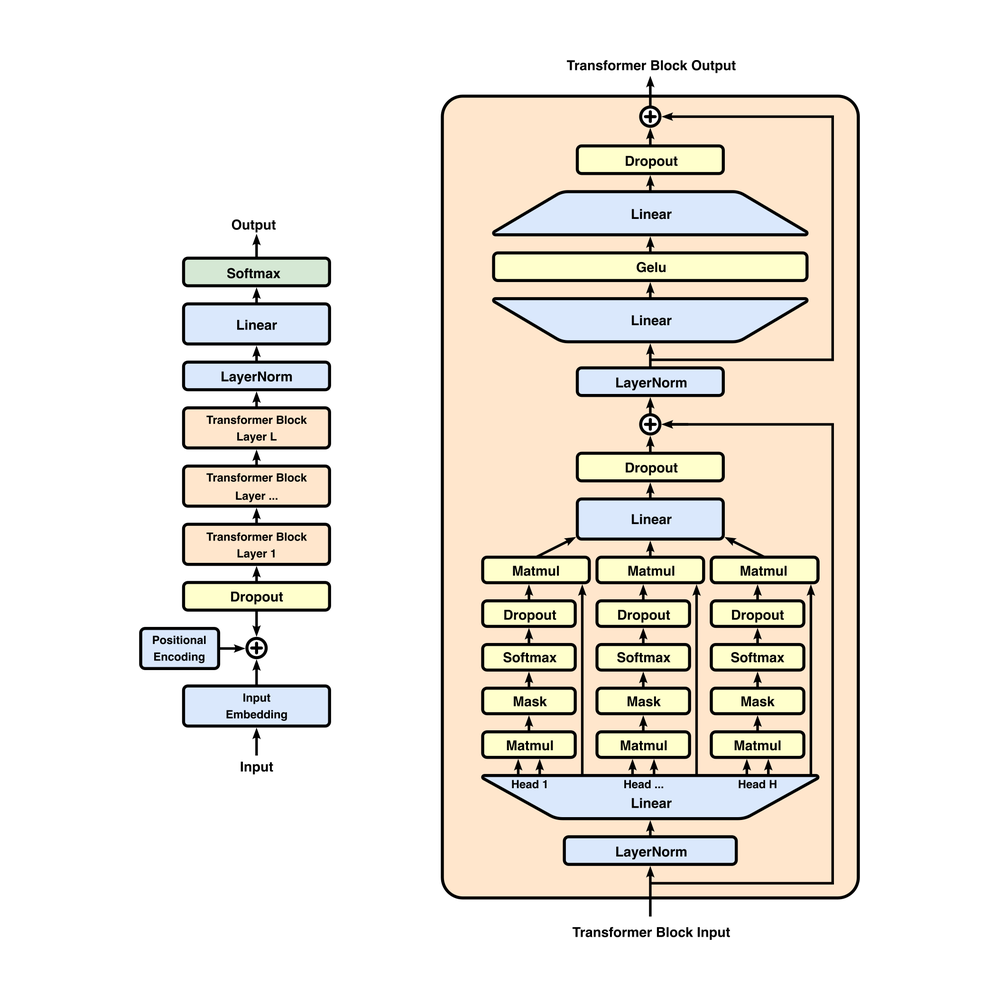

The only variants left are attention groupings: MHA → MQA → GQA → MLA

Architecture diagrams from Wikimedia Commons (AlexNet · ResNet residual block · BERT · LSTM · U-Net · GAN · Full GPT Architecture), CC BY-SA 4.0 / CC0 Public Domain.

Challengers didn’t appear out of thin air; behind them is a structural shift that’s often missed: model architectures are converging. The CNN era was Cambrian — AlexNet / VGG / ResNet / Inception / MobileNet / EfficientNet — every task had its own “best architecture,” hardware didn’t know which one to optimize for, and general-purpose GPUs swept the field. The Transformer era is the opposite: from the 2017 original paper to the frontier models of 2026, the skeleton has barely changed — Pre-LayerNorm, RoPE, RMSNorm, SwiGLU, residual, KV cache are all years-old techniques.

Today’s “innovation” is mostly down to a few attention-grouping variants (MHA → MQA → GQA → MLA), with GQA already the de facto default for open-source large models. Challengers like Mamba / SSM all end up appearing as “hybrid architectures” rather than replacements for the Transformer; a NeurIPS 2025 paper even proved that Mamba and Transformer fall in the same computational equivalence class. This is unprecedented stability in ML history. Its significance matches x86’s victory: once the architecture converges, the target for hardware optimization is fixed for the first time.

Hardware-design freedom unlocked by convergence

- Operators can be fully fixed. From hundreds of operators down to a handful of core ones (MatMul, Softmax, RoPE, LayerNorm) — every non-essential circuit can be cut. The price of GPU generality is millions of transistors wasted on paths an LLM never calls.

- Dataflow can be scheduled at compile time. The Transformer compute graph is statically regular, so it doesn’t need a GPU’s complex dynamic scheduling — GPUs spend a huge fraction of transistors on warp schedulers and memory coalescing logic that adapts to “unknown workloads.”

- Memory hierarchy can be tailor-made. Parameter sizes, KV-cache sizes, and activation sizes are all known in advance. A challenger can design SRAM/HBM/DRAM hierarchies directly for “7B model + 32K context” rather than provision buffers for “any workload” the way a GPU must.

- Numerical precision can be aggressive. int4 / int8 are now the inference norm; FP4 / FP6 are research hot topics. GPUs still need to keep FP32 / FP64 circuits to serve the scientific-computing market — completely redundant for LLMs.

- The extreme: weights etched directly into silicon. Taalas pushes “architecture convergence” to its logical endpoint — etching the 32 layers of Llama 3.1 8B weights as physical transistors directly onto the chip. The chip is the model itself, immutable, but the claim is 1000× better perf/watt than a GPU cluster. See §03.
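To make the “memory hierarchy can be tailor-made” point concrete, here is a rough sizing sketch for a “7B model + 32K context” target. The layer count, head counts, and head dimension are assumed values for a hypothetical 7B-class Transformer, not figures from any vendor, and the helper names are illustrative.

```python
GIB = 1024 ** 3


def kv_cache_bytes(layers: int, kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """KV-cache size for one sequence; the factor 2 covers keys and values."""
    return 2 * layers * kv_heads * head_dim * context_len * bytes_per_value


def weight_bytes(params: float, bytes_per_param: float) -> float:
    """Storage for the weights at a given precision."""
    return params * bytes_per_param


# Assumed shape: 32 layers, 32 query heads, head_dim 128, 32K context, FP16 cache.
LAYERS, QUERY_HEADS, HEAD_DIM, CONTEXT = 32, 32, 128, 32 * 1024

# The attention-grouping variants differ only in how many KV heads are kept.
for name, kv_heads in (("MHA", QUERY_HEADS), ("GQA (8 KV heads)", 8), ("MQA", 1)):
    size = kv_cache_bytes(LAYERS, kv_heads, HEAD_DIM, CONTEXT)
    print(f"{name}: {size / GIB:.2f} GiB KV cache per sequence")

print(f"7B weights at int4: {weight_bytes(7e9, 0.5) / GIB:.2f} GiB")
```

Under these assumptions the cache shrinks from 16 GiB (MHA) to 4 GiB (GQA) to 0.5 GiB (MQA), which is the kind of fixed, known-in-advance number a purpose-built memory hierarchy can be sized around.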

The moat wasn’t breached, it was bypassed

The premise of CUDA’s depth advantage was “you need to support hundreds of operators” — once models converge, that premise vanishes.

Analogy: Office’s moat used to be its thousands of feature components, but today everyone writes documents in Notion using fewer than 50 of those features — the moat wasn’t breached, it was bypassed.

This is why so many AI chip startups suddenly emerged in 2024–2026: it isn’t that capital suddenly appeared, it’s that the design target became stable for the first time, and the win-rate on those bets was raised structurally. Layer on the proliferation of AI coding tools like Claude Code / Codex, and the cost structure of software migration is being rewritten — CUDA’s moat is being eroded on two fronts at once.

§03 · Train/infer split — two markets, two logics

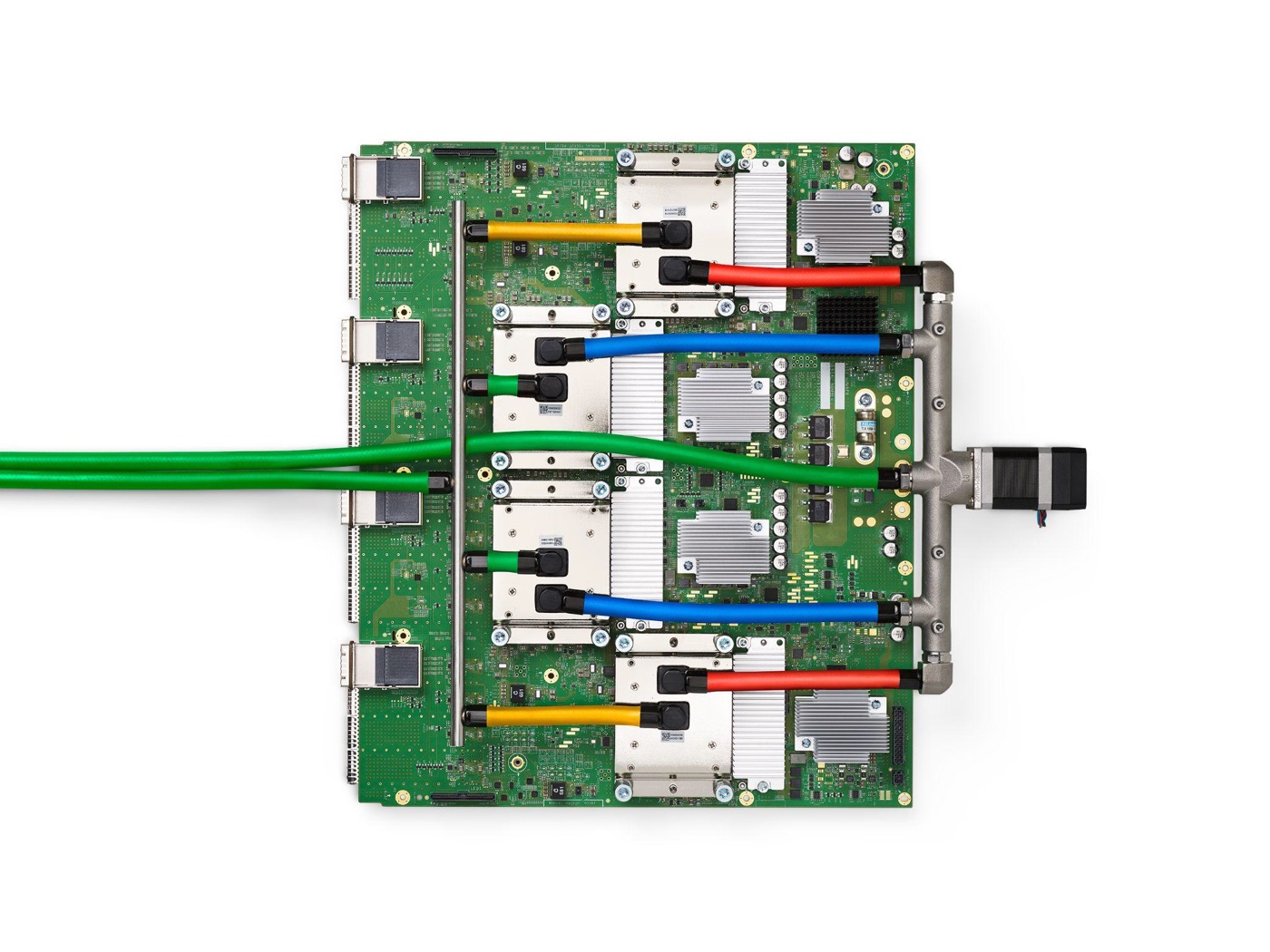

Image: Wikimedia Commons / Google · CC BY 4.0.

Mixing training and inference together leads to a serious misjudgment of NVIDIA’s true position. The compute allocation is flipping dramatically: 2023 train:infer ≈ 2:1, 2025 1:1, 2026 1:2, 2029 close to 1:4. A Lenovo executive at CES 2026 said outright that “future training will be 20%, inference 80%”; Jensen himself has admitted the inference market will eventually be “about a billion times larger” than training. Inference accounts for 80–90% of the total cost across an AI system’s lifecycle; training is just 10–20%.

The implication: NVIDIA’s truly profitable market (training) is becoming relatively small; the truly enormous market (inference) is where its moat is thinnest.

Training vs inference · the share scissors

2025 is the watershed: inference draws level with training for the first time and pulls ahead from 2026. By 2029, training is just ~20%, inference ~80%. NVIDIA’s dominance in training is unassailable in the short term, but every workload feature in inference cuts against it.
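The train:infer ratios quoted above convert to shares of total compute as follows. This is plain arithmetic on the article's own ratios; the function name is illustrative.

```python
from fractions import Fraction


def inference_share(train_parts: int, infer_parts: int) -> Fraction:
    """Inference share of total compute for a train:infer ratio."""
    return Fraction(infer_parts, train_parts + infer_parts)


# train:infer ratios as quoted in the text
RATIOS = {2023: (2, 1), 2025: (1, 1), 2026: (1, 2), 2029: (1, 4)}

for year, (train, infer) in RATIOS.items():
    share = inference_share(train, infer)
    print(f"{year}: inference {float(share):.0%}, training {float(1 - share):.0%}")
# 2023: inference 33%, training 67%
# 2025: inference 50%, training 50%
# 2026: inference 67%, training 33%
# 2029: inference 80%, training 20%
```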

Training market: a fortress where NVIDIA is unassailable in the short term

- Compute is highly concentrated. A single training run coordinates tens of thousands of cards; the demands on NVLink / InfiniBand are extreme; non-GPU paths lack the full ecosystem at this layer.

- Customers are highly concentrated. Only about 10 players globally do frontier pre-training (OpenAI, Anthropic, xAI, Google, Meta, Microsoft, ByteDance, Mistral, DeepSeek, Cohere), with short decision paths and a direct line to Jensen.

- Failure cost is enormous. One training run is hundreds of millions of dollars and half a year. Nobody wants to gamble on immature hardware — “nobody got fired for buying NVIDIA” is the 2026 version of “nobody got fired for buying IBM.”

- The software stack is heaviest here. CUDA + NCCL + cuDNN + Megatron + DeepSpeed + a forest of fused kernels — the migration cost is huge. Anthropic took more than a year to migrate training workloads to TPU.

- Model architecture is still moving on the training side. MoE, long context, test-time scaling, sparse activation, mixed-precision training — training still needs hardware flexibility, the last comfort zone for GPU generality.

- Conclusion. NVIDIA likely still holds 80%+ share of the training market in 2028. Google TPU is the only credible training-capable rival but is opened externally only to a handful of customers (Anthropic + Apple AFM training etc.) — not a marketized competition.

Inference market: the soft spot, every feature cuts against NVIDIA

- Can be deployed in a distributed way. A single card or small cluster suffices; the NVLink advantage is irrelevant. Cerebras / Groq / each big cloud’s bespoke ASIC all stand up here.

- Compute pattern is highly regular. The same attention + FFN call repeated millions of times — perfectly suited to ASICs. GPU generality is pure waste here.

- Low precision is enough. int8 / int4 will run; FP4 is experimentally usable. The FP32 / FP64 circuits a GPU keeps are silicon spent for nothing.

- Memory wall matters more than compute wall. This is the natural advantage of alternative architectures (wafer-scale Cerebras, in-memory computing D-Matrix, near-memory compute Etched / MatX) — they were designed for “memory bandwidth as performance,” GPUs were not.

- Customers are dispersed and cost-sensitive. They look at tokens / dollar and tokens / watt, not peak compute. Google TPU offers 4.7× cost-performance and 67% lower power on inference — Anthropic, Meta, and Midjourney have already migrated some inference workloads.

- Models have converged. Hardware can be optimized end-to-end for the now-fixed Transformer (see §02).
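The two metrics inference buyers compare, tokens per dollar and tokens per watt, can be sketched as below. Every device number here is a hypothetical placeholder chosen for illustration, not a measured or quoted figure, and the model assumes full utilization and ignores hosting, cooling, and networking costs.

```python
SECONDS_PER_YEAR = 365 * 24 * 3600


def tokens_per_joule(tokens_per_sec: float, power_watts: float) -> float:
    """Tokens per watt-second, i.e. the per-watt efficiency metric."""
    return tokens_per_sec / power_watts


def tokens_per_dollar(tokens_per_sec: float, hw_cost_usd: float, power_watts: float,
                      lifetime_years: float = 4.0, usd_per_kwh: float = 0.10) -> float:
    """Lifetime tokens divided by hardware cost plus electricity cost."""
    seconds = lifetime_years * SECONDS_PER_YEAR
    energy_kwh = power_watts / 1000 * seconds / 3600
    total_cost = hw_cost_usd + energy_kwh * usd_per_kwh
    return tokens_per_sec * seconds / total_cost


# Hypothetical devices: placeholder specs, not vendor data.
DEVICES = {
    "general-purpose card": dict(tokens_per_sec=10_000, hw_cost_usd=40_000, power_watts=1_000),
    "inference ASIC": dict(tokens_per_sec=8_000, hw_cost_usd=15_000, power_watts=400),
}

for name, spec in DEVICES.items():
    tpd = tokens_per_dollar(**spec)
    tpj = tokens_per_joule(spec["tokens_per_sec"], spec["power_watts"])
    print(f"{name}: {tpd:,.0f} tokens/$, {tpj:.1f} tokens/J")
```

The point of the sketch is the shape of the comparison: a device with lower peak throughput can still win on both metrics if its purchase price and power draw fall faster than its throughput.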

“The belief that GPUs are the only answer was NVIDIA’s biggest moat — and that belief is now collapsing.”

— Andrew Feldman, Cerebras founder · 2026

The extreme path · Taalas: model labs may directly become hardware players

- Technical principle · weights = physical transistors. The 32 layers of Llama 3.1’s weights are etched directly onto the chip as physical transistors. No HBM, no need to load weights from memory. An input vector arrives, electrical signals flow through the physical transistors corresponding to each layer, the output flows to the next layer, until a token is generated. The whole chip = that model, immutable.

- Performance · 17,000 tok/s · 1000× better perf/watt than a GPU cluster. 17,000 tokens per second on Llama 3.1 8B; one 250W air-cooled card matches an entire GPU cluster. Taalas uses an automated design flow that compresses the cycle from weights to silicon to 2 months, requiring only the top metal mask change — with the architecture stable for 7–8 years, depreciation risk drops dramatically.

- If it works · model lab → hardware player. OpenAI sells GPT-Edition inference cards, Anthropic sells Claude-Edition inference boxes, bypassing both cloud vendors and NVIDIA. Enterprise deployment shifts from “buy GPU + download weights + configure CUDA” to “buy a box, plug it in.” Privacy and IP get solved at the same time — the weights physically live in the customer’s machine room, and extracting them would be reverse-engineering a chip. OpenAI’s $20B Cerebras commitment + the Broadcom partnership for a bespoke inference chip are early signals of exactly this logic.
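The Taalas figures quoted above can be inverted to see what GPU baseline the 1000× claim implies. This is arithmetic on the quoted numbers only (17,000 tok/s, 250 W, 1000×); the variable names are illustrative and the result is a consistency check, not a measurement.

```python
CARD_TOKENS_PER_SEC = 17_000   # quoted throughput on Llama 3.1 8B
CARD_POWER_W = 250             # quoted air-cooled card power
CLAIMED_ADVANTAGE = 1_000      # quoted perf/watt multiple vs a GPU cluster

# Efficiency of the card itself
card_tokens_per_joule = CARD_TOKENS_PER_SEC / CARD_POWER_W

# Baseline efficiency the claim implies for the GPU cluster being compared
implied_baseline_tokens_per_joule = card_tokens_per_joule / CLAIMED_ADVANTAGE
implied_baseline_joules_per_token = 1 / implied_baseline_tokens_per_joule

print(f"card: {card_tokens_per_joule:.0f} tokens/J")
print(f"implied GPU baseline: {implied_baseline_joules_per_token:.1f} J per token")
```

If the 1000× claim holds, the comparison baseline spends roughly 15 joules per token, so the claim stands or falls on which GPU configuration and batch size were used as the reference.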

Challenger landscape (2026)

Funding / customers / roadmap basis · sources Fortune, CNBC, Digitimes, TrendForce (2026 Q1).

| Category | Player | Path / advantage | Customer / status | Key number |
|---|---|---|---|---|
| Big-co in-house | Google · TPU | ASIC · dual-stack train+infer | Internal + Anthropic + Apple AFM training | v4/v5p · 4.7× inference CP |
| Big-co in-house | Amazon · Trainium / Inferentia | ASIC · primarily inference | Anthropic · AWS internal | Trainium2 ramping |
| Big-co in-house | Microsoft · Maia | ASIC · own-cloud inference | Azure internal + part of OpenAI | In production from 2024 |
| Big-co in-house | Meta · MTIA | ASIC · recommendations + LLM inference | Meta internal | MTIA v2 deployed |
| Bespoke vs GPU scissors | TrendForce 2026 shipment growth | ASIC +44.6% vs GPU +16.1% | Structural · not cyclical | 2027 ASIC > 15% of data centers |
| Independents | Cerebras · WSE | Wafer-scale · in-package | OpenAI reported $20B procurement · in IPO | Mid-May IPO valuation $30B+ |
| Independents | Groq · LPU | Low-latency token-batch | Already absorbed by NVIDIA $20B IP / team (2025-12) | The acquisition itself proves the threat |
| Independents | D-Matrix | In-memory compute | Microsoft backing | $5B-class raise in 2026 |
| Independents | SambaNova | Reconfigurable dataflow | Intel reportedly signed term sheet to acquire | Acquisition pending |
| Independents | Etched / MatX / Ayar Labs | Near-memory / optical interconnect | $5B-class raises each in 2026 | Silicon-photonics trend |
| Independents | Furiosa / Positron / Taalas | Korea + US new entrants · model burned to silicon | Taalas: Llama 3.1 8B @ 17K tok/s | 1000× perf/watt |
| Geography · China | Huawei Ascend · Alibaba PPU · Cambricon · Biren | Forced to maturity by export controls | Chinese AI-chip market ~$50B/yr (Jensen estimate) | 2025 domestic substitution accelerating |
| Geography · Europe | Fractile · Axelera · Euclyd · Optalysys | Photonic / analog computing | Early stage | Longer horizon |
| Total startup raise | 2026 global AI-chip startups | Capital has bet “NVIDIA can’t win forever” | 2026 cumulative raise | $8.3B |

Sources: Fortune (2026-01), CNBC (2026-04-17), Digitimes, TrendForce 2026 Q1. Cerebras mid-May IPO is publicly disclosed; the OpenAI-Cerebras $20B procurement intent is from media reports. NVIDIA itself has invested $4B as a hedge (photonic computing, analog / physical computing).
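The TrendForce growth gap in the table (ASIC +44.6% vs GPU +16.1%) translates into a share shift that depends on the starting mix. A minimal sketch, assuming a two-segment market of ASICs and GPUs only; the starting shares below are hypothetical, since the table does not give one.

```python
def share_after_growth(asic_share: float, asic_growth: float = 0.446,
                       gpu_growth: float = 0.161) -> float:
    """ASIC share after one year of differential shipment growth.

    Assumes the market consists only of ASICs and GPUs.
    """
    asic = asic_share * (1 + asic_growth)
    gpu = (1 - asic_share) * (1 + gpu_growth)
    return asic / (asic + gpu)


# Hypothetical starting shares, for illustration only.
for start in (0.10, 0.15, 0.20):
    print(f"start {start:.0%} -> after one year {share_after_growth(start):.1%}")
```

Even a large growth gap moves share by only a few points per year from a small base, which is consistent with the article's view that the shift is structural but slow.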

§04 · Political risk — independent variable

Image: Wikimedia Commons / Public Domain.

NVIDIA’s political risk is broadly underestimated. On Dwarkesh Patel’s podcast (2026-04-15), Jensen repeatedly delivered “loyalty oath” style remarks and got into an argument with the host: pressed on the national-security risk of selling advanced chips into China, he lost composure and shot back “You’re not talking to somebody who woke up a loser”. Critics see him as repeatedly sidestepping direct answers on national security, emphasizing only “selling more US technology is good,” which is read as overly mercenary. Earlier on the Bg2 podcast he called the “China hawk” label “a badge of shame”, which commentary articles framed as “auditioning for a Global Times editorial.”

The structural difference vs. Apple and Tesla

- Apple · supply-chain exposure · contributing to China. Apple’s China exposure is manufacturing supply chain + part of sales — India / Vietnam are diversifying it. Cash flow direction: China manufacturing → Apple procurement → supply-chain workers benefit. Easy to frame as “contributing to China.” Apple carries universal-values DNA; its posture before Congress is friendly.

- Tesla · market + factory · industrial capability contribution. Tesla’s Shanghai factory is the model for China’s EV supply chain; Model 3 / Model Y get sold to Chinese consumers. Musk carries MAGA DNA; at most this is internal Republican-vs-Democrat skirmishing, not the external “pro-CCP, treason-adjacent” risk profile.

- NVIDIA · selling to China · strategic materiel · suspicion of treasonous trade. NVIDIA is “selling to China”, and it’s selling something officially defined as “militarily critical technology” — a categorical, not a quantitative, distinction. Layer on Jensen’s ethnic Chinese background + repeated framings being read as “overly mercenary,” and US grassroots do not naturally trust an ethnic-Chinese tech titan perceived as pro-CCP. Members of Congress will inevitably cater to that demand.

Decoupling script · passive, not active

“Public support for Taiwan independence + criticism of CCP” as a flagship event is low-probability; the more likely path is being passively pushed out.

Jensen’s instinct is to dodge, in the manner of a businessman — after saying Taiwan was a country in 2024, he proactively walked it back the next day with “not a geopolitical statement,” seeking to defuse rather than to take a political side. So the more likely decoupling script is:

- Congress pushes tighter export controls — the next generation after H20 keeps getting blocked; Sovereign AI legislation lands

- CFIUS intervenes in NVIDIA’s China collaborations / customer mix

- The board comes under pressure to adjust China-related disclosures and launch a strategic review

- Eventual forced exit from the China market — 25% data-center revenue line goes to zero

- The result is similar but the posture is different — not a heroic divorce, a compliance push.

So NVIDIA gets dealt with politically before Apple / Tesla do. This is the second acceleration curve, independent of the challenger structure.

§05 · Timing & signals — when & how to watch

Image: Wikimedia Commons / 2017 · CC BY-SA 4.0.

This is the easiest place in the whole analysis to be wrong. NVIDIA’s decline is almost certain, but there’s a long road between “almost certain” and “starts next year”. Supply-chain lock-in (HBM, CoWoS, ODM full-rack capacity booked years out) + training-side software inertia + large customers’ unwillingness to switch in one go — those three buffers are thick. The bear case’s difficulty isn’t direction; it’s timing.

The pie is still growing: the four big clouds’ combined 2026 capex approaches $600B; Goldman Sachs projects $1.15T total for 2025–2027. Even if all challengers land on time, NVIDIA’s AI data-center share in 2028 is likely still above 60% — share down, absolute revenue may still grow.
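“Share down, absolute revenue may still grow” reduces to a break-even condition: revenue grows whenever the market expands by more than the ratio of old share to new share. A sketch of that arithmetic, where the 85% starting share is an assumed midpoint of the 80–90% range quoted earlier and the 60% end share is the article's 2028 floor:

```python
def revenue_multiple(share_before: float, share_after: float,
                     market_multiple: float) -> float:
    """Revenue multiple given a share change and a market-size multiple."""
    return (share_after / share_before) * market_multiple


def breakeven_market_multiple(share_before: float, share_after: float) -> float:
    """Market growth multiple at which revenue is exactly flat."""
    return share_before / share_after


SHARE_NOW = 0.85    # assumption: midpoint of the quoted 80-90% range
SHARE_2028 = 0.60   # the article's "still above 60%" floor

needed = breakeven_market_multiple(SHARE_NOW, SHARE_2028)
print(f"market must grow more than {needed:.2f}x for revenue to rise")
# market must grow more than 1.42x for revenue to rise
```

Under these assumptions, any market expansion above roughly 1.4× by 2028 leaves absolute revenue higher despite the share loss.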

Three-stage rhythm

- Phase 1 · 2026–2027 · still winning on the surface. Total revenue still growing, gross margin held at 70–75%. Cloud-vendor in-house ASICs take 10–15% share, but training-market expansion offsets the inference decline. On the surface NVIDIA looks unscathed — this is the easiest phase to be fooled by.

- Phase 2 · 2027–2028 · the real inflection window. The inference market exceeds training in absolute size, the inference leak begins to outweigh training growth, total share drops visibly, and gross margin shifts down toward 65–70%. Non-GAAP gross margin falling below 70% is the structural signal.

- Phase 3 · post-2028 · the fortress shakes. Google TPU, the AMD MI series, and possibly OpenAI’s bespoke chip make a substantive breach in training; if the “model burned into hardware” path works, the entire inference-hardware market gets reshuffled, and gross margin falls below 60%. GPUs go from “allocated good” back to “commodity.”

Non-GAAP gross margin · three-stage trajectory

Reference: in 2022 the gaming-GPU cycle saw gross margin fall from 64% to 56% (over a year), a benchmark for the speed of cyclical supply-demand reversal. A structural inflection will be slower but harder to recover from. The real alarm is non-GAAP gross margin falling below 70% without recovery.

Primary alerts · track non-GAAP gross margin against three thresholds

- Tier-1 alert · margin < 70% · challengers bargaining substantively. This is a structural signal, not a cyclical fluctuation. It means large customers are negotiating prices using alternative options; the 35% Blackwell Ultra premium model becomes hard to sustain.

- Tier-2 alert · margin < 65% · pricing-power-loss spiral. Scale economics can no longer save it. Confirmed by 2–3 quarters of consecutive QoQ decline.

- Tier-3 alert · B-series / Rubin price cuts to clear inventory · GPU has reverted to commodity. From “allocated good” back to “commodity.” Once price cuts to clear inventory appear, the structural supply-demand reversal is complete.
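The three alert tiers above can be written as a small rule set over a series of quarterly non-GAAP gross margins. This is one possible encoding, not a definitive one: the function names are illustrative, the Tier-3 trigger is passed in as a manual flag because it is a pricing event rather than a margin level, and Tier-2 is only confirmed after at least two consecutive QoQ declines.

```python
from typing import Sequence


def trailing_declines(margins: Sequence[float]) -> int:
    """Number of consecutive quarter-over-quarter declines ending at the latest quarter."""
    streak = 0
    for i in range(len(margins) - 1, 0, -1):
        if margins[i] < margins[i - 1]:
            streak += 1
        else:
            break
    return streak


def alert_level(margins: Sequence[float], clearance_price_cuts: bool = False) -> str:
    """Map a margin history (percent, oldest first) to the article's alert tiers."""
    latest = margins[-1]
    if clearance_price_cuts:
        return "tier-3"   # price cuts to clear inventory: GPU is a commodity again
    if latest < 65 and trailing_declines(margins) >= 2:
        return "tier-2"   # pricing-power-loss spiral, confirmed by consecutive declines
    if latest < 70:
        return "tier-1"   # challengers bargaining substantively
    return "none"


# Hypothetical margin series, for illustration only.
print(alert_level([75.0, 74.5, 75.0]))   # none
print(alert_level([75.0, 72.0, 69.0]))   # tier-1
print(alert_level([72.0, 68.0, 64.0]))   # tier-2
```

A single quarter below 65% without a confirmed streak falls back to Tier-1 here, a design choice that follows the “2–3 quarters of consecutive QoQ decline” confirmation rule.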

Auxiliary signals

- Top cloud vendors’ bespoke-ASIC share. Whether “bespoke ASIC share of capex” breaks 25%. Google + Amazon combined are around 15% today; watch 2027 H2 data.

- Pricing pressure on inference product lines. Pricing on inference-optimized variants like B200 NVL will soften earlier than on training flagships.

- Actual shipments of model-lab non-GPU inference hardware. Deals like OpenAI-Cerebras $20B need to be measured by actual delivery, not announcements; the tape-out cadence of OpenAI’s bespoke chip (Broadcom partnership) is a key milestone.

- Cerebras post-IPO valuation. Public-market pricing of the alternative path. If post-IPO valuation holds at $30B+, public markets are endorsing the challenger narrative; if it breaks below $15B, the market still believes in the NVIDIA monopoly.

- Actual China-market revenue scale. The lagging indicator of geopolitical progress. If export controls tighten further and revenue share falls from 25% to below 5% — this is the political script paying out.

- Sovereign AI–type legislation. Any US Congressional legislation requiring “pre-shipment review for sensitive compute” is an early signal that the political script is arriving.
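The quantitative auxiliary signals above can be tracked with a simple checklist. A minimal sketch using only the thresholds stated in the list (25% ASIC share, $30B / $15B Cerebras valuation, 5% China revenue share); the class and field names are hypothetical, and the qualitative signals (shipments, legislation) are left out because they have no numeric threshold in the text.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AuxReadings:
    asic_share_of_capex: float        # fraction, e.g. 0.15 for 15%
    cerebras_valuation_usd_b: float   # post-IPO valuation in $B
    china_revenue_share: float        # fraction of revenue from China


def read_signals(r: AuxReadings) -> List[str]:
    """Return the auxiliary signals that have fired for a set of readings."""
    fired = []
    if r.asic_share_of_capex > 0.25:
        fired.append("bespoke-ASIC share of capex above 25%")
    if r.cerebras_valuation_usd_b >= 30:
        fired.append("public markets endorse the challenger narrative")
    elif r.cerebras_valuation_usd_b < 15:
        fired.append("public markets still believe in the NVIDIA monopoly")
    if r.china_revenue_share < 0.05:
        fired.append("political script paying out")
    return fired


# Readings roughly matching the text's starting point: nothing has fired yet.
print(read_signals(AuxReadings(0.15, 20.0, 0.25)))  # []
```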

§06 · Bottom line — the inflection signal

Image: Wikimedia Commons / CC BY-SA 4.0.

The whole logic loops cleanly.

Six structural observations

- Architecture convergence unlocks hardware design freedom. Transformer is the new x86; CUDA wasn’t breached, it was bypassed.

- The challenger landscape has formed. Big-co ASICs + first-tier startups + China domestic substitution + model labs burning hardware — 2026 global startups have raised $8.3B.

- Training fortress holds, inference soft spot exposed. 2025 is the watershed; 2029 train:infer 1:4. Every inference workload feature cuts against NVIDIA.

- Model labs may step into hardware. OpenAI’s $20B Cerebras commitment + Broadcom bespoke inference chip + Taalas burning hardware — the entire AI value chain may be re-sliced.

- Political risk accelerates the process. NVDA’s China exposure is structurally different from AAPL / TSLA: “selling strategic materiel” vs. “contributing to the supply chain.” Jensen’s ethnic-Chinese background plus repeated framings being read as “overly mercenary” mean that, given what US voters demand and what members of Congress supply, NVDA logically gets dealt with before Apple or Tesla.

- The time window is longer than intuition suggests. HBM / CoWoS / ODM lock-in + training software inertia + large customers unwilling to switch in one go — these three buffers are thick. The bear case’s difficulty isn’t direction, it’s timing.

Bottom line

Dominance still holds, and holds strongly. But beyond the moat, challengers have already shifted from “chasing” to “routing around.”

What you really need to watch isn’t “who’s catching up” — it’s “whether NVIDIA’s own inflection signal is firing.” Non-GAAP gross margin is that signal.

Tracking rhythm:

- Margin 75% → 70% (Tier-1 alert)

- Margin 70% → 65% (Tier-2 alert)

- B / Rubin price cuts to clear inventory (Tier-3 alert)

- Cloud-vendor bespoke-ASIC share crossing 25%

- OpenAI-Cerebras $20B actually delivered

- Sovereign AI / export-control escalation

As long as margin holds above 70%, the monopoly structure still holds; the moment it falls below 70% and can’t recover, the inflection signal has fired.

Apple’s true edge in the AI era isn’t “we can also build models”; it’s “we don’t need to build models” (see /signals/apple-ai). NVIDIA’s true danger isn’t “someone is chasing”; it’s “it can’t slow down,” because B2B customer rationality speeds up the unwinding the moment it does. These are the two faces of the same structural observation.

— Contrarian research · 2026-04-26